The Guardrails We Refuse to Build

As AI becomes embedded in daily life, the fight is no longer about innovation but about who sets the limits.

On March 26, 2026, Judge Rita F. Lin issued a preliminary injunction blocking the Pentagon from treating Anthropic as a national security risk. The designation came after Anthropic refused to allow its AI system, Claude, to be used for certain surveillance and military purposes that the company had explicitly prohibited in its terms of use.

The ruling did not resolve the broader dispute. It did not require the Pentagon to continue using Anthropic’s technology, nor did it settle the long-term legal questions around government contracting and AI. What it did do was halt, at least temporarily, a federal response that looked less like a security determination and more like retaliation for a company drawing a line.

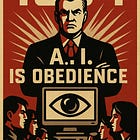

That moment, narrow as it may seem, opens into something much larger. It forces a question that the United States has not yet answered. Who gets to set the guardrails for artificial intelligence?

When restraint becomes a liability

Anthropic’s position was not radical. The company did not reject government work outright, and it did not argue that AI should never be used in national security contexts. It simply imposed limits on how its system could be used, particularly in areas involving surveillance and autonomous decision-making tied to human harm.

Yet that act of restraint appears to have triggered consequences.

This is where the story becomes less about one company and more about a pattern. If a private company sets boundaries and faces pressure for doing so, that raises concerns about whether self-regulation is viable. If states attempt to set their own rules and are warned against creating a patchwork, that limits another path. If Congress has not yet passed a comprehensive federal law, then the remaining option—federal regulation—exists more in theory than in practice.

The result is not the absence of regulation. It is something more ambiguous and, in many ways, more troubling. It is a narrowing of the available paths to regulation while the technology itself continues to expand.

A technology already woven into daily life

This would be a different conversation if artificial intelligence were still confined to laboratories or specialized industries. It is not.

AI is now embedded in how students learn and how teachers evaluate. It shapes how content is created, edited, and distributed across media. It is used in workplaces to draft documents, analyze data, design campaigns, and streamline administrative work. It appears in government agencies, where it has already been used to examine contracts, evaluate programs, and inform decisions about staffing and funding. Its use by DOGE remains controversial.

It also operates in more intimate spaces. People use AI to ask medical questions, to process personal experiences, and to navigate relationships. Some turn to it as a form of companionship, particularly in moments of isolation or distress.

This is not a hypothetical future. It is a present reality. The question is no longer whether AI will affect daily life. It already does.

The human context we keep overlooking

That reality matters even more when placed alongside the broader social landscape. In 2023, the U.S. Surgeon General under President Biden identified loneliness and social isolation as a significant public health concern. Access to mental health care remains uneven. Many people navigate complex emotional and psychological challenges with limited support.

In that context, it should not be surprising that some individuals turn to AI systems that are always available, responsive, and nonjudgmental. The appeal is not difficult to understand. What is less clear is how those interactions should be governed, particularly when users are vulnerable.

Recent lawsuits have alleged that AI chatbots contributed to self-harm or suicide. At the same time, states such as New York and California have begun to regulate so-called “companion” AI systems, requiring safeguards like crisis response protocols and referrals to support services.

These developments are not fringe concerns. They are early indicators of how a powerful technology interacts with existing human vulnerabilities.

States step in where Washington has not

In the absence of a comprehensive federal framework, states have begun to act. Their approaches vary, yet they tend to cluster around a few core concerns.

The patchwork is not theoretical. The Future of Privacy Forum reported in March that it was tracking 98 chatbot-specific bills across 34 states, along with three federal proposals, a sign of just how quickly lawmakers are trying to respond to concerns around companion AI, mental health chatbots, disclosures, and youth protections. Some laws focus on protecting minors, particularly in relation to emotionally immersive or potentially exploitative AI systems. Others address nonconsensual imagery and deepfakes, recognizing how easily AI can be used to generate harmful or deceptive content. Still others target consumer transparency and algorithmic discrimination, requiring disclosures or imposing duties of care when automated systems affect people’s lives in meaningful ways.

This is not a coordinated national strategy. It is a series of responses to specific harms as they emerge. It is also, in many ways, the predictable result of federal delay.

Washington has expressed concern about a fragmented regulatory landscape. The White House has proposed a national framework that emphasizes a minimally burdensome approach and warns against state laws that could create undue friction. That concern is not entirely unfounded. A patchwork of rules can complicate compliance and create uncertainty for companies operating across state lines.

Yet the logic breaks down without a strong federal alternative. A uniform national standard only solves the problem of fragmentation if it meaningfully addresses the harms that prompted state action in the first place. Otherwise, preemption risks removing protections without replacing them.

We have seen this before

There is a familiar pattern here. The closest comparison is not perfect, yet it is instructive.

The internet followed a similar trajectory. It was recognized early as transformative. It spread rapidly into daily life. It created new forms of communication, commerce, and community. It also introduced new risks, from cybercrime and harassment to privacy violations and the erosion of shared information environments.

Federal policy largely favored growth and innovation, often with a light regulatory touch. Over time, states stepped in to address gaps, particularly in areas like data privacy and consumer protection. Decades later, the United States still lacks a comprehensive federal framework that fully addresses the range of internet-related harms.

AI is not the internet. The technologies differ, as do their capabilities and risks. Yet the structural lesson remains relevant. When a powerful technology becomes embedded before guardrails are established, regulation becomes reactive. It becomes fragmented, contested, and often incomplete.

The cost of waiting

There is a tendency to treat delay as neutrality, as though choosing not to regulate is equivalent to leaving the field open for innovation. In reality, delay is a decision. It allows norms to form, dependencies to develop, and power to consolidate.

Once that happens, regulation becomes more difficult. It is no longer a matter of setting expectations for a new technology. It is a matter of untangling systems that people rely on, businesses that have been built around them, and behaviors that have become routine.

This is where the stakes of the current moment become clear. AI is moving quickly from optional tool to embedded infrastructure. The longer guardrails are deferred, the more they will have to contend with an environment shaped without them.

What we are actually arguing about

This is not an argument against AI. It is not an argument against innovation. Most technologies bring genuine benefits. AI is no exception.

The question is not whether we should use it. The question is how we govern it.

If the United States wants to avoid a fragmented patchwork of state laws, then it needs to offer a credible alternative. That means establishing a federal baseline that addresses the harms already emerging, particularly those affecting minors, vulnerable individuals, and the integrity of information itself.

If the concern is that regulation might slow progress, then it is worth asking what kind of progress we are trying to achieve. Speed alone is not a sufficient metric. A technology that advances rapidly while creating preventable harm is not a success story. It is a warning.

The guardrails we choose not to build

There is a line often associated with the technology industry: move fast and break things. It works in environments where the costs of failure are contained.

The world is not that environment.

In public life, what breaks is not just a product. It can be trust, safety, and, in some cases, people themselves. That is why restraint is not the opposite of innovation. It is part of responsible innovation.

The lesson from past technologies is not that we should fear what is new. It is that we should take seriously the consequences of how it is deployed.

AI is already here. The question is whether we will shape it with foresight and care, or whether we will continue to treat guardrails as something to be added later, once the damage is harder to undo.

That is not a technical question. It is a choice.

Sources:

Reuters, “US judge blocks Pentagon’s Anthropic blacklisting for now,” March 26, 2026

The White House, “National Policy Framework for Artificial Intelligence,” March 2026

Reuters, “Trump releases AI policy for Congress to pre-empt state rules,” March 20, 2026

Future of Privacy Forum, “2026 Chatbot Legislation Tracker,” March 26, 2026

Reuters, “Lawsuit says Google’s Gemini AI chatbot drove man to suicide,” March 4, 2026

Reuters, “Google, AI firm settle lawsuit over teen’s suicide linked to chatbot,” January 7, 2026

U.S. Department of Health and Human Services, “Our Epidemic of Loneliness and Isolation,” May 2023
