How Data Centers Use Water — and Why AI Is Making the Problem Bigger

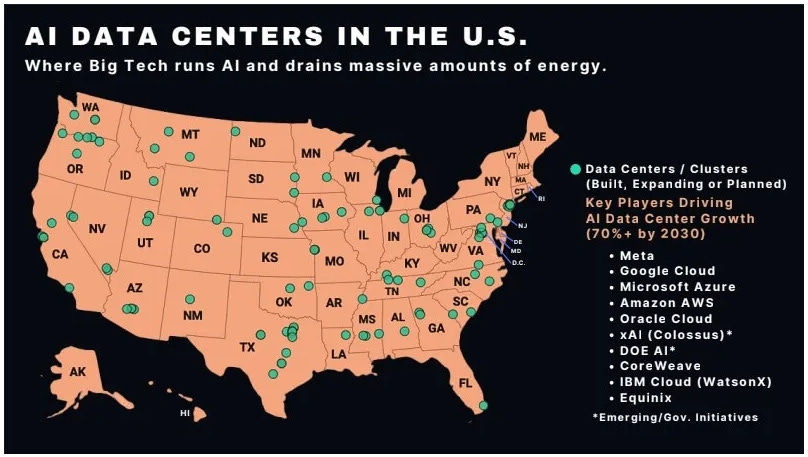

The AI boom is not just a software story. It is a physical infrastructure fight over water, electricity, public resources, and Big Tech power.

Artificial intelligence systems could consume hundreds of billions of liters of water annually, according to a widely discussed 2025 estimate that placed global AI water use between roughly 312.5 and 764.6 billion liters per year. That range is comparable to the annual water demand associated with the global bottled water industry. The findings highlight something many people still overlook: behind the software is physical infrastructure, including data centers, electricity grids, cooling systems, land, and public resources.

The rapid growth of AI is already reshaping how communities think about energy, water, and industrial development. Large technology companies are racing to build more data centers to support chatbots, image generators, search systems, cloud computing, and increasingly complex AI models. Those facilities require vast amounts of electricity and generate enormous amounts of heat. In many cases, water is used to keep those systems cool enough to operate safely.

AI itself is not inherently harmful. Many people see enormous potential in artificial intelligence, from medical research to scientific discovery to everyday productivity. The larger question is whether the infrastructure supporting AI will be built with the same seriousness, transparency, and public oversight that society eventually learned to apply to other large-scale systems such as electrification, highways, and telecommunications.

Image from The Cold Down

Key Takeaways

AI systems rely on data centers that often require large amounts of electricity and water.

A 2025 study estimated that AI systems could consume between 312.5 and 764.6 billion liters of water annually.

Water use comes both from cooling data centers directly and from generating the electricity that powers them.

Communities across the United States are increasingly questioning how AI infrastructure affects local water systems, utility costs, land use, and environmental quality.

The debate is not simply about technology. It is also about governance, transparency, public resources, and who bears the costs of rapid infrastructure expansion.

In This Article

Why AI uses water

What the latest studies found

Why communities are concerned

Big Tech and public resources

AI and the electrification comparison

What to watch next

Frequently asked questions

AI Feels Weightless. It Isn’t.

Artificial intelligence is often marketed as something invisible and frictionless. A person types a question into a chatbot, generates an image, or uses an automated assistant, and the interaction appears almost instantaneous. The physical systems supporting those interactions are largely hidden from public view.

Yet behind every AI system are massive facilities filled with servers, networking equipment, processors, cooling systems, backup generators, and electrical infrastructure. Those facilities consume real resources and occupy real physical space.

This matters because the AI boom is increasingly an infrastructure story rather than simply a software story. Data centers now require enough electricity and cooling capacity to reshape local power systems, water planning, zoning decisions, and public infrastructure investment.

Earlier Coffman Chronicle reporting examined how the rapid expansion of AI infrastructure is increasingly being treated as a national strategic priority, even as questions remain about environmental oversight, governance, and long-term accountability.

The comparison to electrification is useful here.

Few people today would argue that electricity itself was a mistake. Electrification transformed modern life and produced enormous social and economic benefits. However, the expansion of electrical infrastructure also sparked major debates over power plants, dams, transmission lines, utility monopolies, environmental impacts, land use, and public regulation. Communities often fought over where infrastructure would be built, who would benefit, who would pay for it, and what safeguards should exist.

AI infrastructure appears to be entering a similar phase.

The question is not whether society should use artificial intelligence. The question is whether the infrastructure behind AI will be developed with careful planning, democratic accountability, and long-term stewardship.

Why Does AI Use Water?

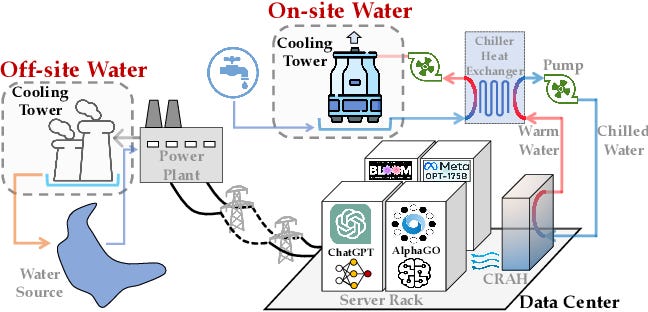

Artificial intelligence systems run on data centers, which are large facilities filled with computers and specialized processors. These processors perform the calculations required to train and operate AI models.

Those calculations generate heat.

If servers become too hot, they can fail or suffer performance problems. Data centers, therefore, require extensive cooling systems to continuously remove heat.

Many facilities use water-based cooling because water is extremely effective at absorbing and transferring heat. Some data centers use cooling towers, where water evaporates to carry away heat collected from the equipment; that evaporated water leaves the local system rather than being returned. Others use liquid-cooling systems that circulate water or specialized fluids directly to processors and other hardware.

Water use does not stop at the building itself.

Electricity generation can also consume enormous amounts of water, particularly when power comes from thermal power plants fueled by natural gas, coal, or nuclear fuel. Hydroelectric systems also involve substantial water infrastructure. This means that AI’s water footprint includes both direct water use within data centers and indirect water use associated with electricity production.

The scale of water demand depends on many factors, including:

the size of the facility,

local climate,

cooling technology,

regional electricity sources,

and how heavily the systems are used.

Facilities in hotter climates often require more intensive cooling, particularly during heat waves. Water demand can also spike during periods of high electricity demand when power grids are already under strain.
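To make the split between direct and indirect water use concrete, here is a minimal Python sketch of a common simplified accounting. It assumes three inputs: the facility's IT energy use, its on-site water usage effectiveness (WUE, liters consumed per kWh of IT energy), and the water consumed per kWh by the power plants supplying it (labeled EWIF here). Power usage effectiveness (PUE) scales IT energy up to total facility energy. This is an illustration, not the method used by the 2025 study, and the names `Facility` and `water_footprint_liters` are hypothetical. In this framing, climate shows up mainly in WUE, the regional grid mix in EWIF, and usage in the energy term.

```python
from dataclasses import dataclass


@dataclass
class Facility:
    """Hypothetical inputs for one facility over one period (for example, a year)."""
    it_energy_kwh: float   # electricity used by servers and other computing equipment
    wue_l_per_kwh: float   # on-site water consumed per kWh of IT energy (mostly evaporative cooling)
    pue: float             # power usage effectiveness: total facility energy / IT energy (>= 1.0)
    ewif_l_per_kwh: float  # water consumed per kWh generated by the supplying power plants


def water_footprint_liters(f: Facility) -> dict[str, float]:
    """Split a facility's water footprint into direct (on-site) and indirect (power generation) parts."""
    direct = f.it_energy_kwh * f.wue_l_per_kwh
    # The grid supplies all facility energy (IT load plus cooling and other overhead), hence the PUE factor.
    indirect = f.it_energy_kwh * f.pue * f.ewif_l_per_kwh
    return {"direct": direct, "indirect": indirect, "total": direct + indirect}


# Placeholder numbers chosen only to show the arithmetic; they do not describe any real facility.
example = Facility(it_energy_kwh=1_000_000, wue_l_per_kwh=0.5, pue=1.2, ewif_l_per_kwh=2.0)
print(water_footprint_liters(example))
```

With these placeholder inputs, the sketch reports 500,000 liters on site and 2.4 million liters at the power plants, or 2.9 million liters in total. The values say nothing about real facilities; the point is that a cooler climate mostly lowers the first term, while a grid that relies less on water-intensive thermal plants lowers the second.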

What the 2025 Study Found

One of the most widely cited estimates of AI’s environmental footprint emerged from a 2025 analysis of the growing resource demands of artificial intelligence systems and data centers.

The study estimated that AI systems could consume between approximately 312.5 and 764.6 billion liters of water annually. Researchers compared that range to the global bottled water industry’s yearly demand to help illustrate the scale involved.

The study also examined carbon emissions associated with AI infrastructure. Researchers estimated that AI-related emissions could eventually reach levels comparable to those produced by major cities if current growth trends continue.

Importantly, these numbers are estimates rather than precise measurements from every company or facility. Researchers used available infrastructure data, electricity demand projections, cooling assumptions, and modeling techniques to approximate the likely scale of AI resource use.

The exact numbers will continue evolving as technology changes, cooling systems improve, and companies build new facilities. Yet the broader conclusion remains significant: AI infrastructure is growing rapidly enough that its demands on water and electricity are becoming impossible to ignore.

Newer studies published in 2026 suggest that local infrastructure pressure may become even more urgent in the coming years.

Researchers at the University of California, Riverside and the California Institute of Technology estimated that data center cooling demand could require hundreds of millions to more than a billion gallons of additional peak water capacity per day within several years, potentially creating tens of billions of dollars in infrastructure costs.

Additional regional analyses have warned that states experiencing rapid data center expansion, including Texas, could see major increases in water demand tied to both cooling and electricity generation.

The larger point is not that every AI system consumes the same amount of water. The larger point is that the physical footprint of AI infrastructure is expanding rapidly while public understanding and regulatory systems are still catching up.
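The 2025 estimate is expressed in liters per year, while the 2026 figures are in US gallons per day. A short Python sketch, assuming a 365-day year and the exact definition of 1 US gallon as 3.785411784 liters, puts the 2025 range into the same units. The function name is illustrative.

```python
LITERS_PER_US_GALLON = 3.785411784  # exact, by definition of the US gallon
DAYS_PER_YEAR = 365                 # assumption: ignores leap years


def annual_liters_to_daily_gallons(liters_per_year: float) -> float:
    """Convert an annual volume in liters to an average daily volume in US gallons."""
    return liters_per_year / DAYS_PER_YEAR / LITERS_PER_US_GALLON


# The 2025 study's range, in liters per year.
for label, liters in [("low", 312.5e9), ("high", 764.6e9)]:
    gallons_per_day = annual_liters_to_daily_gallons(liters)
    print(f"{label}: {gallons_per_day / 1e6:,.0f} million US gallons per day on average")
```

This works out to roughly 226 million to 553 million US gallons per day on average. The two figures still measure different things: the 2025 range describes average yearly water use by AI systems, while the 2026 estimate concerns additional peak capacity that water systems would need, so the conversion puts them in common units without making them directly comparable.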

Why Location Matters

Not all data centers create the same environmental impact.

Location is one of the most important factors determining how much strain a facility places on water systems and electrical grids.

Facilities built in cooler climates may require less intensive cooling. Regions with cleaner electrical grids may produce fewer emissions associated with AI operations. Areas with stable water supplies may experience less stress than drought-prone regions already facing water shortages.

The opposite is also true.

Data centers proposed in water-stressed areas can intensify competition over limited resources, particularly during periods of extreme heat or drought. Large facilities may place additional pressure on municipal systems already serving homes, farms, hospitals, and local businesses.

These concerns are no longer theoretical.

Communities across the United States are increasingly debating new data center proposals because residents want to understand how those projects could affect water availability, electricity prices, local infrastructure, and quality of life.

The Coffman Chronicle has previously examined how AI infrastructure projects can affect local communities, public resources, and environmental systems through our reporting on data center expansion, regulatory oversight, and infrastructure planning.

Why This Matters for Regular People

The environmental impact of AI is often discussed in abstract technical language. However, the consequences are increasingly local and practical.

Those consequences are already visible in some communities. In our earlier reporting on AI data center expansion in Tennessee and Louisiana, we examined how residents raised concerns about pollution, energy demand, and environmental strain tied to large-scale computing projects.

Local Water Systems

Large facilities can increase demand on municipal water systems, particularly during periods of extreme heat when cooling demand rises sharply.

Communities already dealing with drought conditions or aging infrastructure may face additional pressure as new industrial-scale facilities come online.

Electricity Infrastructure and Utility Costs

AI infrastructure requires enormous amounts of electricity.

Utilities may need to expand transmission systems, construct new substations, upgrade grid infrastructure, or increase generating capacity to support large data center campuses. In some cases, residents worry that portions of those costs could ultimately be passed on to ratepayers through higher utility bills.

Public Subsidies and Tax Incentives

Many communities offer tax incentives or infrastructure support to attract large technology projects.

Supporters argue that these projects can stimulate economic growth and generate local revenue. Critics question whether communities receive benefits proportional to the long-term demands placed on water systems, roads, electrical infrastructure, and public services.

In previous reporting, The Coffman Chronicle explored how resistance to large-scale AI infrastructure is growing in states like Pennsylvania, where residents have raised concerns about utility costs, land use, and the uneven distribution of benefits and burdens.

Environmental Justice Concerns

Environmental burdens are not always distributed equally.

Communities already facing pollution, industrial development, or economic disadvantage often worry that they will absorb disproportionate environmental risks associated with rapid infrastructure expansion.

The debate over AI infrastructure increasingly overlaps with broader questions about land use, public health, environmental stewardship, and democratic accountability.

Big Tech, Public Resources, and Private Profit

The central issue is not simply that artificial intelligence uses water.

Modern society depends on infrastructure-intensive systems every day. Electricity, transportation, manufacturing, telecommunications, and cloud computing all require substantial physical resources.

The larger debate concerns how costs and benefits are distributed.

The Coffman Chronicle previously examined how many data center proposals rely on vague sustainability language while avoiding binding commitments around water use, clean energy, and long-term infrastructure accountability.

Many AI systems are being developed by some of the wealthiest corporations in the world, backed by billions of dollars in investment and driven by intense competition for technological dominance. Those companies may profit enormously from AI products and services. Communities, meanwhile, may be asked to provide land, water, tax incentives, electricity infrastructure, and long-term environmental capacity to support that expansion.

That tension is becoming increasingly visible.

Residents across multiple states have raised concerns about whether communities are receiving sufficient transparency, oversight, and local benefit before large projects are approved. Questions about water use, utility strain, transmission infrastructure, pollution, and public subsidies are now appearing in zoning hearings, local elections, and state-level policy debates.

The issue is not whether AI innovation should stop. The issue is whether the public has meaningful input into how AI infrastructure is built and whether safeguards are in place before long-term commitments are made.

The Coffman Chronicle has been tracking these questions through our broader coverage of AI infrastructure, environmental accountability, and community response.

What Responsible AI Infrastructure Could Look Like

Artificial intelligence infrastructure does not have to be developed irresponsibly.

As we previously reported, many of the most important environmental decisions surrounding AI infrastructure are made before construction begins, including where facilities are located and how they will interact with local water and power systems.

Engineers, planners, and environmental researchers already understand many of the steps that can reduce environmental strain and improve long-term sustainability.

Possible approaches include:

locating facilities in regions with more stable water supplies,

using advanced cooling technologies that reduce water consumption,

improving transparency around water and electricity demand,

requiring environmental impact assessments before approval,

matching electricity demand with cleaner energy sources,

incorporating demand-response systems that reduce strain during grid emergencies,

and establishing enforceable operating conditions tied to water and energy use.

Many experts also argue that communities should receive clearer information before projects are approved, including realistic projections for electricity demand, water use, transmission infrastructure, and long-term expansion plans.

Responsible development is not anti-technology. It is an acknowledgment that large-scale infrastructure decisions have long-term consequences.

AI’s Electrification Moment

The history of electrification offers a useful comparison.

Electricity transformed society. It improved quality of life, accelerated economic development, expanded industry, and reshaped modern civilization.

Yet electrification also required major public debates about dams, transmission corridors, utility monopolies, environmental protection, land acquisition, and government oversight. Large infrastructure systems created conflicts alongside benefits.

The lesson from electrification is not that society should fear transformative technology. The lesson is that transformative infrastructure requires governance, stewardship, and public accountability.

AI infrastructure may now be entering a similar stage.

The public conversation is gradually shifting away from an abstract fascination with AI systems themselves and toward the physical systems that support them. Questions about water, electricity, land use, transmission infrastructure, taxation, and environmental oversight are becoming harder to separate from the future of artificial intelligence.

What to Watch as AI Data Centers Expand

As AI infrastructure continues growing, several issues will likely shape future public debate:

New Data Center Proposals

Communities across the United States are evaluating increasingly large data center campuses tied to AI expansion.

Water Use Permits

Questions about long-term water demand, drought planning, and cooling systems are becoming central parts of infrastructure discussions.

Electrical Grid Strain

Utilities are already examining how rapid data center growth could affect regional power systems and future generating capacity.

Public Subsidies

Tax incentives and infrastructure support agreements may face greater scrutiny as residents ask whether local benefits justify long-term costs.

Transmission Infrastructure

New transmission lines, substations, and grid upgrades may become increasingly controversial as electricity demand grows.

Transparency Requirements

Pressure is growing for clearer public reporting on water use, emissions, cooling systems, and electricity demand associated with AI infrastructure.

Local Political Pushback

Communities across multiple states are beginning to organize around questions involving land use, utility costs, environmental impact, and local decision-making authority.

Frequently Asked Questions

How much water does AI use?

A 2025 estimate suggested that AI systems could consume between approximately 312.5 and 764.6 billion liters of water annually. Exact usage varies depending on facility design, cooling systems, electricity sources, and overall computing demand.

Why does AI need water?

AI systems run on servers that generate large amounts of heat. Many data centers use water-based cooling systems to prevent overheating. Electricity generation can also indirectly consume water.

Do all data centers use water?

No. Some facilities use air cooling or alternative cooling technologies. However, many large facilities still rely heavily on water for cooling, particularly in warm climates or high-density operations.

Is AI worse than other internet services?

AI systems can require significantly more computing power than many traditional online services, particularly when training large models. Environmental impact depends on workload, infrastructure design, electricity sources, and cooling methods.

Who pays for AI’s water and energy demands?

Technology companies pay directly for many operational costs. However, communities and public utilities may also absorb indirect costs associated with infrastructure expansion, electricity generation, transmission systems, and water management.

What can communities do before approving data centers?

Communities can request detailed environmental reviews, water-use projections, electricity demand estimates, public reporting requirements, and enforceable operating conditions before projects receive approval.

The Real Question

Artificial intelligence may become one of the defining technologies of modern life. Many people believe it will transform medicine, research, communication, education, manufacturing, and scientific discovery.

That possibility makes the infrastructure behind AI more important, not less.

AI may feel digital and intangible from the user’s perspective. Yet the systems supporting it are physical, resource-intensive, and increasingly large enough to reshape communities, utilities, and environmental planning.

The question is not only what AI can do.

The question is who controls the infrastructure behind it, who profits from it, and who pays for the water, electricity, land, and public systems required to sustain it.

The Coffman Chronicle tracks how Big Tech, billionaires, corporations, and political power reshape everyday life. Subscribe to follow our coverage of AI infrastructure, monopoly power, environmental accountability, and the hidden costs of the modern tech economy.